Most people treat prompts like magic words. You type something poetic, hit generate, and hope the model understands what you meant.

But when you slow down and look at it properly, a prompt is not very different from giving direction on a film set.

First the subject.

Then the environment it lives in.

After that the lighting, the camera position, the mood of the frame.

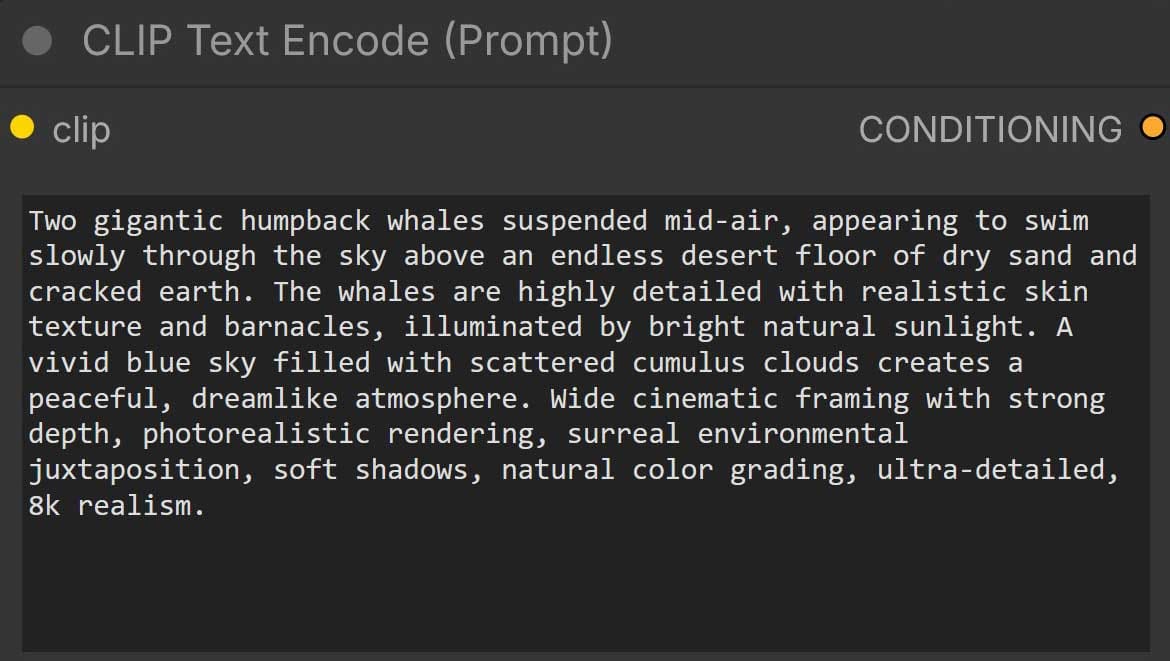

That is essentially what this prompt is doing.

Not poetry. Just instructions.

Two humpback whales.

Floating through a desert sky.

Bright sunlight.

Wide cinematic framing.

Realistic textures.

Soft shadows.

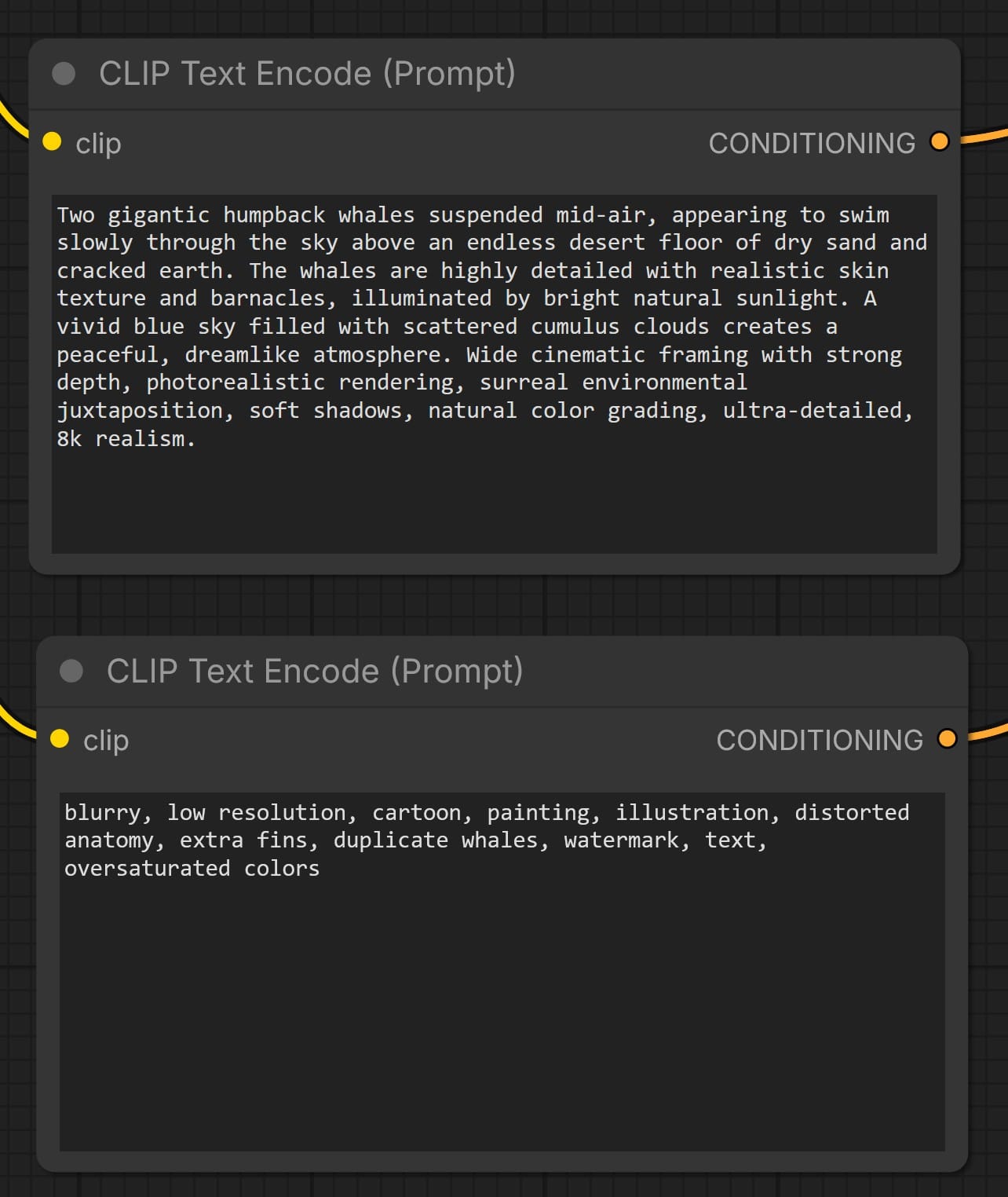

If you’re working with ComfyUI, it’s worth being descriptive. The model responds much better when you spell out the subject, the setting, the light, and the framing instead of leaving them implied.
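To make the "instructions, not poetry" idea concrete, here is a minimal sketch that treats the prompt as a shot description with named fields and joins them into one string. The `ShotDirection` class and its field names are hypothetical, invented for this illustration; they are not part of ComfyUI. The result is just text you could paste into a positive prompt box.

```python
from dataclasses import dataclass


@dataclass
class ShotDirection:
    """Hypothetical container for the directions listed above (not a ComfyUI type)."""
    subject: str
    environment: str
    lighting: str
    framing: str
    details: tuple[str, ...] = ()

    def to_prompt(self) -> str:
        # Subject and environment read as one phrase; everything else is
        # appended as a comma-separated direction.
        parts = [
            f"{self.subject} {self.environment}",
            self.lighting,
            self.framing,
            *self.details,
        ]
        # Skip any field that was left empty.
        return ", ".join(p.strip() for p in parts if p.strip())


whales = ShotDirection(
    subject="two humpback whales",
    environment="floating through a desert sky",
    lighting="bright sunlight",
    framing="wide cinematic framing",
    details=("realistic textures", "soft shadows"),
)

positive_prompt = whales.to_prompt()
print(positive_prompt)
```

Keeping the fields separate is the point of the sketch: you can change the lighting or the framing on its own and regenerate, the same way you would adjust one department on set without rewriting the whole brief.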

Then on the other side, you tell the system what you do not want.

No cartoon style.

No distorted anatomy.

No extra fins.

No duplicate whales.

No watermark.

I recommend using negative prompts too. They act like guardrails, helping the model avoid common mistakes.
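The guardrail list can be prepared the same way. This is a small sketch, assuming the common convention in Stable Diffusion-style workflows that the negative prompt field already means "avoid this", so the leading "No" from the notes is dropped and only the unwanted concept is kept. The helper name `to_negative_prompt` is hypothetical and not a ComfyUI function.

```python
# Notes written the way a person would say them on set.
unwanted_notes = [
    "No cartoon style.",
    "No distorted anatomy.",
    "No extra fins.",
    "No duplicate whales.",
    "No watermark.",
]


def to_negative_prompt(notes: list[str]) -> str:
    """Turn 'No X.' notes into a comma-separated list of things to avoid."""
    terms = []
    for note in notes:
        term = note.strip().rstrip(".")
        # Assumption: the negative field implies "avoid", so "No " is redundant.
        if term.lower().startswith("no "):
            term = term[3:]
        terms.append(term.lower())
    return ", ".join(terms)


negative_prompt = to_negative_prompt(unwanted_notes)
print(negative_prompt)
```

The string that comes out is what you would paste into the negative prompt field, alongside whatever you wrote for the positive one.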

Positive direction.

Negative direction.

If you think about it, this is not very different from compositing.

In compositing we also guide the image by defining constraints.

What belongs in the shot.

What does not belong.

What the lighting should feel like.

What the final image should communicate.

Different tools. The thinking behind them is surprisingly similar.

I suspect that a lot of the confusion around generative tools comes from people approaching them as magic instead of structure.

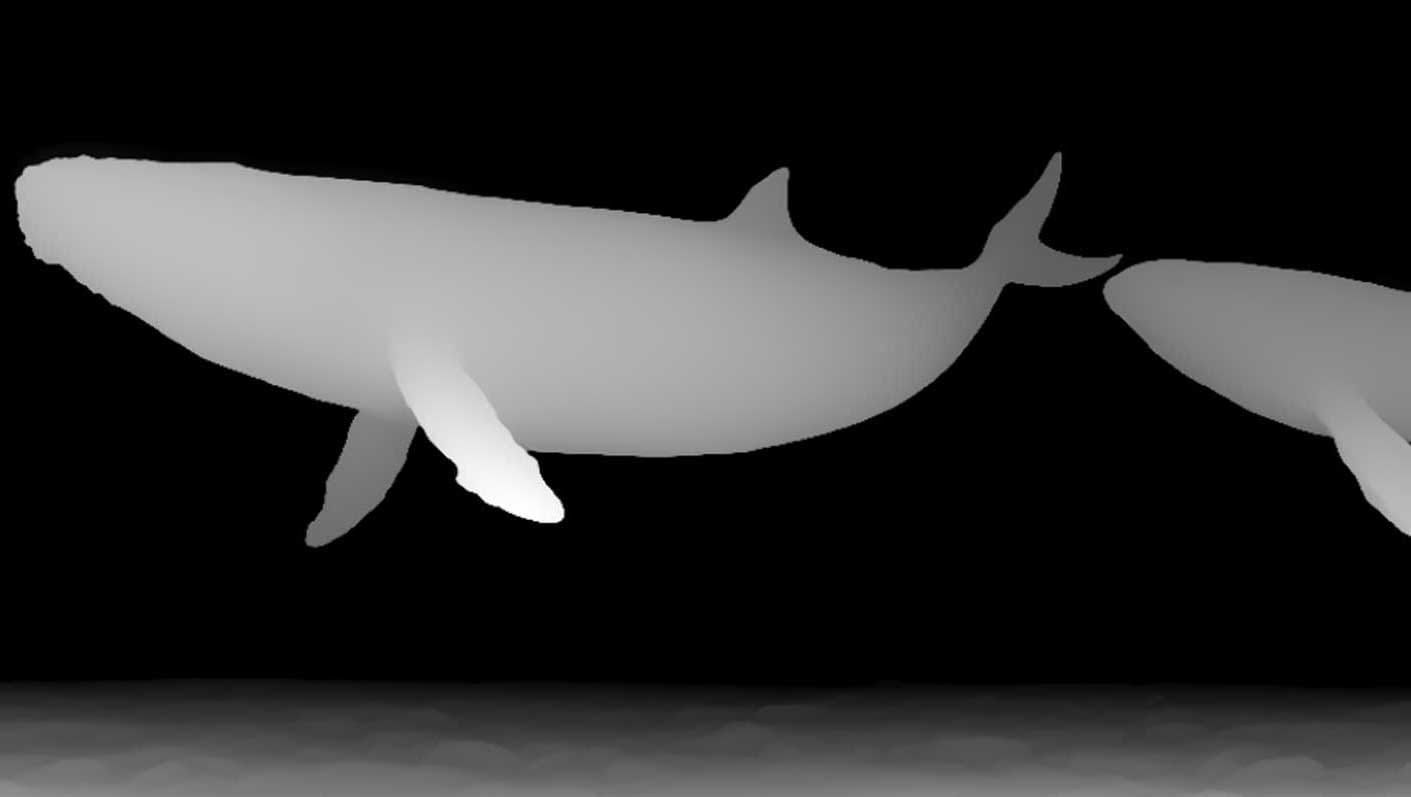

Right now I’m just experimenting. Small steps.

The whales move a little more each day.

And somewhere in the middle of that process, a course is slowly forming.
