Been diving deep into depth map generation with ComfyUI. The initial excitement around these tools is starting to settle into a more pragmatic assessment, which is exactly what we need.

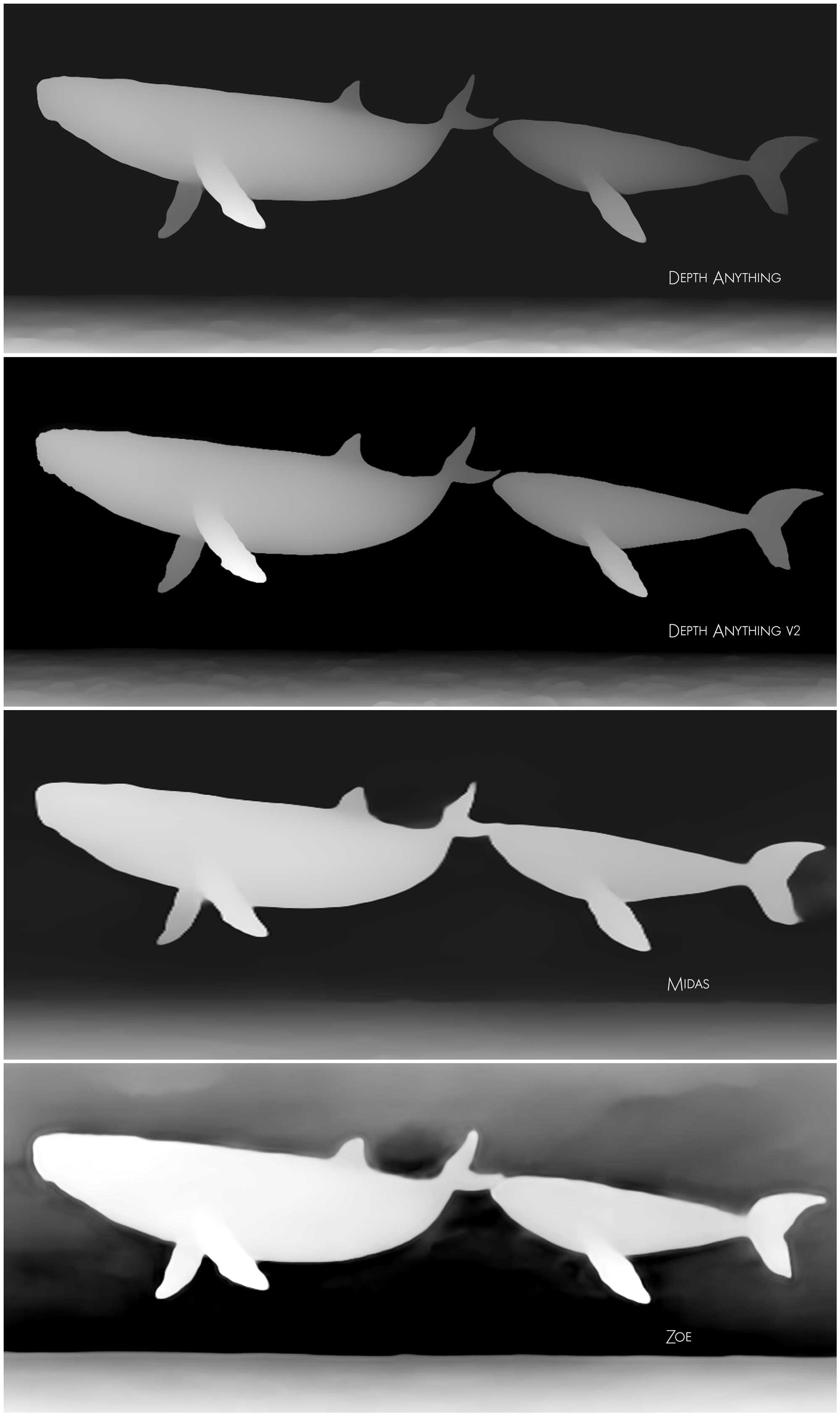

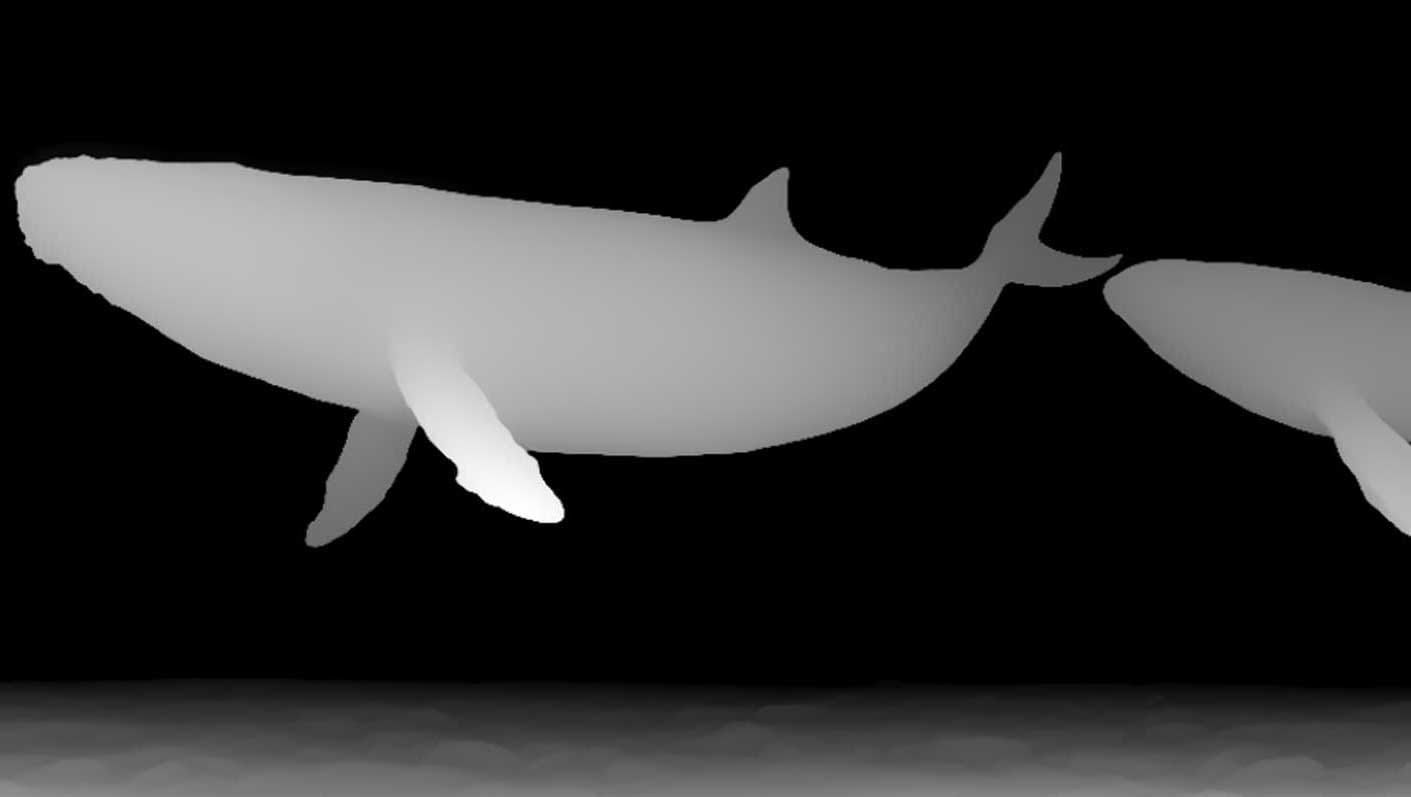

I've been running the same shot, that floating whale scene, through a few different models designed specifically for depth estimation: “Depth Anything,” “Depth Anything V2,” “MiDaS,” and “Zoe Depth Anything.”

The goal? To see how their outputs compare on identical input, and to understand the limitations of each approach.

“Depth Anything V2” is proving surprisingly robust. It consistently produces a decent depth map, and frankly, it’s the most visually convincing of the bunch out of the box. It's quickly becoming my go-to for initial explorations. The results are… well, they look right.

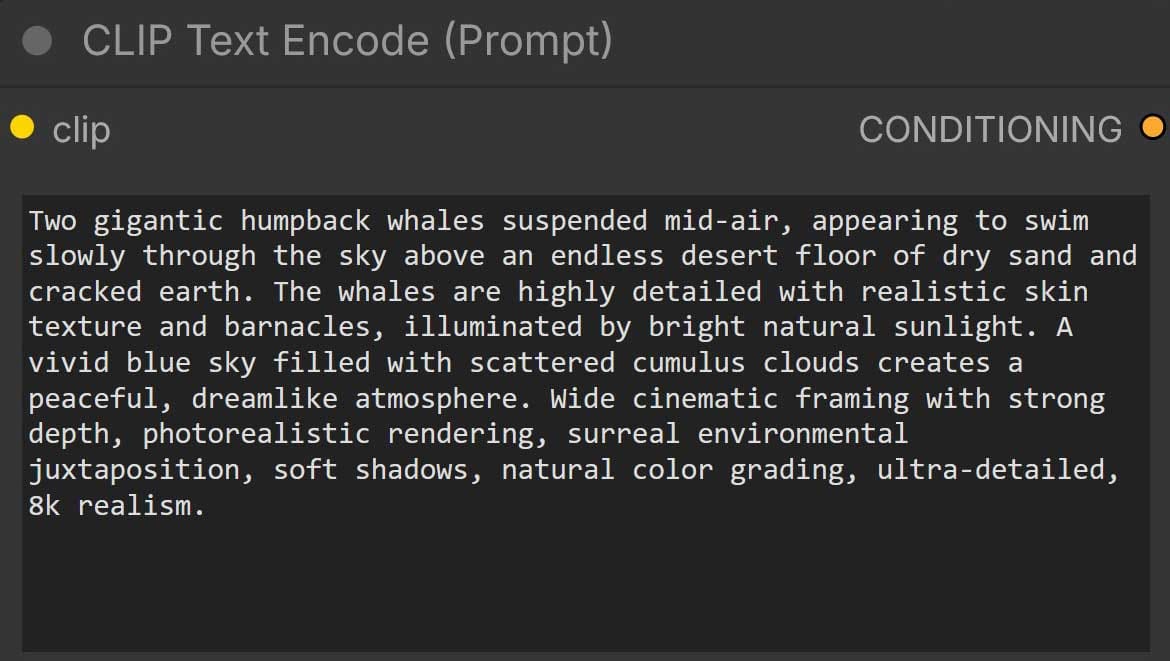

However, there’s a significant caveat, and this is where things get frustratingly technical: all of them output an 8-bit depth map.

Now, I know 8-bit has its place, but when you're talking about VFX and compositing, especially anything beyond basic matte painting, you need more range and precision: a 32-bit float or at least a half-float (16-bit) depth pass. An 8-bit map can only hold 256 distinct depth values, so a long, smooth depth falloff gets crushed into visible steps. It’s the difference between subtle shading nuances and just… gray.
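To put rough numbers on the 8-bit problem, here's a minimal Python/NumPy sketch that stores the same smooth depth ramp as 8-bit integers, half-floats, and 32-bit floats, then counts how many distinct depth values survive. The ramp and the function names are hypothetical; it only models storage precision, not what any particular ComfyUI node does internally.

```python
import numpy as np


def quantize_uint8(depth: np.ndarray) -> np.ndarray:
    """Round-trip normalized depth in [0, 1] through an 8-bit integer image."""
    return np.round(depth * 255.0).astype(np.uint8).astype(np.float64) / 255.0


def roundtrip_float(depth: np.ndarray, dtype) -> np.ndarray:
    """Round-trip depth through a floating-point storage type (float16 or float32)."""
    return depth.astype(dtype).astype(np.float64)


def compare_precision(samples: int = 4096) -> dict:
    """Return {storage type: (distinct depth values, max absolute error)}."""
    # Hypothetical smooth depth ramp from near (0.0) to far (1.0), standing in for
    # a gradually receding surface such as a floor or a hazy background.
    ramp = np.linspace(0.0, 1.0, samples)
    stored = {
        "uint8": quantize_uint8(ramp),
        "float16": roundtrip_float(ramp, np.float16),
        "float32": roundtrip_float(ramp, np.float32),
    }
    return {
        name: (np.unique(values).size, float(np.max(np.abs(values - ramp))))
        for name, values in stored.items()
    }


if __name__ == "__main__":
    for name, (distinct, max_err) in compare_precision().items():
        print(f"{name:>7}: {distinct:5d} distinct depth values, max error {max_err:.1e}")
```

On a 4,096-sample ramp the 8-bit version collapses to 256 levels while float32 keeps every sample distinct; half-float lands in between, which is why it's often an acceptable compromise.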

Without that higher bit depth, it's incredibly difficult to integrate these maps into a traditional pipeline. The loss of detail is immediately apparent when you try to use them for things like depth of field, volume calculations, or even just layering with other elements.
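Depth of field is where this bites hardest. Below is a minimal sketch, assuming a standard thin-lens circle-of-confusion model and a hypothetical linear mapping from normalized depth (0 to 1) onto 2 to 50 metres, that compares blur sizes computed from an 8-bit depth pass against a float one along a single scanline. The lens values (50 mm, f/2, focused at 5 m) and the function names are arbitrary illustrative choices, not how any of these nodes calibrate their output.

```python
import numpy as np


def circle_of_confusion(distance_m, focus_m=5.0, focal_mm=50.0, f_number=2.0):
    """Thin-lens circle-of-confusion diameter, in millimetres, for objects at distance_m."""
    f = focal_mm / 1000.0            # focal length in metres
    aperture = f / f_number          # aperture diameter in metres
    coc_m = aperture * np.abs(distance_m - focus_m) / distance_m * f / (focus_m - f)
    return coc_m * 1000.0            # convert metres to millimetres


def blur_banding(quantize_8bit, width=2048, near_m=2.0, far_m=50.0):
    """Return (distinct blur sizes, largest blur jump between neighbouring pixels)."""
    # Hypothetical scanline across a receding surface: normalized depth 0..1.
    depth = np.linspace(0.0, 1.0, width)
    if quantize_8bit:
        depth = np.round(depth * 255.0) / 255.0  # simulate an 8-bit depth pass
    # Assumed linear mapping from normalized depth to metres (illustration only).
    distance = near_m + depth * (far_m - near_m)
    coc = circle_of_confusion(distance)
    return np.unique(coc).size, float(np.max(np.abs(np.diff(coc))))


if __name__ == "__main__":
    for label, flag in (("8-bit", True), ("float", False)):
        distinct, max_jump = blur_banding(flag)
        print(f"{label:>5}: {distinct} distinct blur sizes, largest jump {max_jump:.4f} mm")
```

With 8-bit input, neighbouring pixels share the same blur size until the depth value ticks over to the next level, and then the blur jumps; the float version changes smoothly from pixel to pixel. That stepping is what reads as banding in a defocused render.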

I’m starting to realize that a lot of the “wow” factor around these generative tools is built on a foundation of… well, a simplified reality.

It's a crucial distinction: generating something visually resembling depth is one thing; creating a truly useful depth pass for professional workflows is another entirely.
